Xscode

xscode.com › transforming-xml-data-with-azure-data-factory-xml-to-parquet-and-sql

Transforming XML Data with Azure Data Factory: XML to Parquet and SQL

Define the XML Schema: Import the ... like Filter, Aggregate, or Derived Column to shape the data as needed · Choose the Sink transformation and configure it to write to Parquet format · Specify the output location in either Azure Data Lake or Blob Storage...

Videos

17:43

Azure Data Factory Quick Tip: Transfer Data From XML Document to ...

06:45

#55. Azure Data Factory - Parse XML file and load to Blob storage ...

52:15

ADF Inline Datasets: XML, XLSX, Delta, CDM - YouTube

47:15

Azure Data Factory Inline Datasets. Working with XML, XLSX, Delta ...

26:06

Parquet Format in Azure Data Factory | Using Self Hosted IR In ...

03:57

How to Convert Parquet File to CSV File in Azure Data Factory | ...

How to Create parquet file from SQL Table data

Simply use a Copy Data activity in a pipeline and provide the details of the source (i.e. which table to read) and the sink (i.e. the ADLS Gen2 folder location).
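As a rough illustration of the source and sink details mentioned above, the following sketch builds a copy activity definition as a Python dictionary and serializes it to JSON. It assumes the SQL table lives in Azure SQL (hence `AzureSqlSource`) and that the sink is ADLS Gen2; the dataset names `SqlTableDataset` and `AdlsParquetDataset`, the table name, and the helper `build_copy_activity` are hypothetical and not taken from the original article.

```python
import json


def build_copy_activity(source_dataset, sink_dataset, table_name):
    """Return a copy activity definition as a plain dict (hypothetical helper)."""
    return {
        "name": f"Copy_{table_name}_to_parquet",
        "type": "Copy",
        # Datasets are referenced by name; both names are placeholders.
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            # Assumption: the source table is in Azure SQL Database.
            "source": {"type": "AzureSqlSource"},
            # Assumption: the sink writes Parquet files to ADLS Gen2.
            "sink": {
                "type": "ParquetSink",
                "storeSettings": {"type": "AzureBlobFSWriteSettings"},
            },
        },
    }


activity = build_copy_activity("SqlTableDataset", "AdlsParquetDataset", "Customers")
activity_json = json.dumps(activity, indent=2)
print(activity_json)
```

The table and folder themselves would be configured on the two datasets, so the activity only needs to name them.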

tech-findings.com

tech-findings.com › home › azure data factory › getting started with adf - creating and loading data in parquet file from sql tables dynamically

Getting started with ADF - Creating and Loading data in parquet ...

What is Parquet file format

Parquet is a structured, column-oriented (also called columnar storage), compressed binary file format.

What is item in Foreach in adf

Item is the current object/record of the ForEach items array.
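A small Python analogy may make this concrete. It is not ADF code: `run_foreach` is a hypothetical stand-in for the ForEach activity, and the table list is made-up sample data. In an ADF pipeline the same values would be read inside the loop with expressions such as `@item().name`.

```python
def run_foreach(items, body):
    """Call body once per element, passing the current element as 'item'."""
    results = []
    for item in items:
        results.append(body(item))
    return results


# Sample items array (hypothetical); each element is one object/record.
tables = [
    {"schema": "dbo", "name": "Customers"},
    {"schema": "dbo", "name": "Orders"},
]

# Inside the loop, 'item' is the current record, so its fields can be used
# to build a per-table output path.
paths = run_foreach(tables, lambda item: f"{item['schema']}/{item['name']}.parquet")
print(paths)
```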

Top answer 1 of 3

2

Currently, ADF doesn’t support XML natively, but there are workarounds:

- You can write your own code and run it through an ADF custom activity.

- SSIS has built-in support for XML as a source, which may be worth a look.

2 of 3

2

In that case you need some custom code. I would choose one of these options:

- Azure Functions: only for simple data processing

- Azure Databricks: if you need to process large XML data
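To give a sense of what such custom code might look like for a simple case, here is a minimal sketch that uses only the Python standard library to turn repeating XML elements into flat rows and then into CSV text. The sample document, the tag names, and the helpers `xml_to_rows` and `rows_to_csv` are all hypothetical. Writing Parquet instead of CSV would need an additional library such as pyarrow, which is not shown here.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Made-up sample input; a real job would read the file from storage.
SAMPLE_XML = """<orders>
  <order id="1"><customer>Ada</customer><total>12.50</total></order>
  <order id="2"><customer>Lin</customer><total>7.00</total></order>
</orders>"""


def xml_to_rows(xml_text, record_tag):
    """Turn each <record_tag> element into a flat dict of attributes and child text."""
    root = ET.fromstring(xml_text)
    rows = []
    for record in root.iter(record_tag):
        row = dict(record.attrib)
        for child in record:
            row[child.tag] = (child.text or "").strip()
        rows.append(row)
    return rows


def rows_to_csv(rows):
    """Serialize rows to CSV text, using the union of keys as the header."""
    fieldnames = []
    for row in rows:
        for key in row:
            if key not in fieldnames:
                fieldnames.append(key)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = xml_to_rows(SAMPLE_XML, "order")
csv_text = rows_to_csv(rows)
print(csv_text)
```

This only handles one level of nesting; deeper structures need an explicit flattening rule.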

Microsoft Learn

learn.microsoft.com › en-us › azure › data-factory › supported-file-formats-and-compression-codecs

Supported file formats by copy activity in Azure Data Factory - Azure Data Factory & Azure Synapse | Microsoft Learn

February 13, 2025 - Azure Data Factory supports the following file formats. Refer to each article for format-based settings. Avro format · Binary format · Delimited text format · Excel format · JSON format · ORC format · Parquet format · XML format · You can use the Copy activity to copy files as-is between two file-based data stores, in which case the data is copied efficiently without any serialization or deserialization.

Stack Overflow

stackoverflow.com › questions › 72547903 › azure-data-factory-run-script-on-parquet-files-and-output-as-parquet-files

Azure Data Factory - run script on parquet files and output as parquet files - Stack Overflow

In Azure Data Factory I have a pipeline, created from the built-in copy data task, that copies data from 12 entities (campaign, lead, contact etc.) from Dynamics CRM (using a linked service) and outputs the contents as parquet files in account storage.

Stack Overflow

stackoverflow.com › questions › 77623842 › how-to-flatten-an-xml-file-in-an-azure-data-flow-when-the-source-is-dynamic

How to flatten an XML file in an azure data flow when the source is dynamic - Stack Overflow

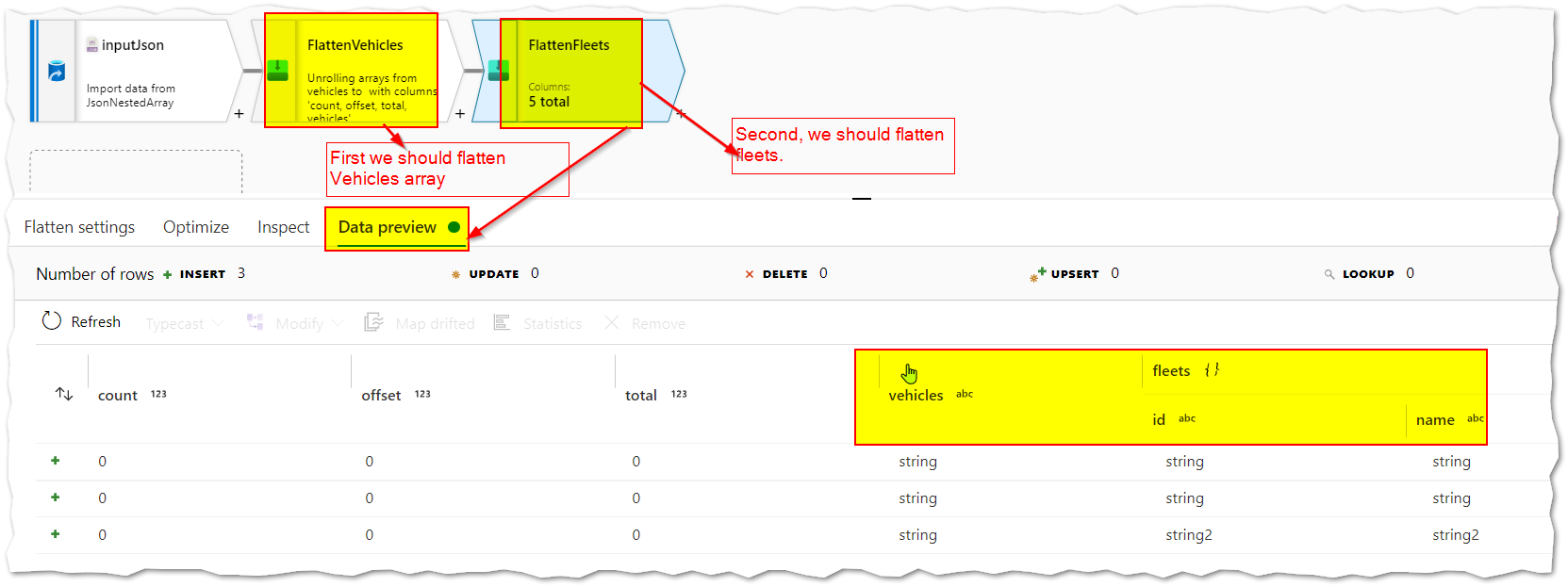

I am creating an Azure Synapse pipeline that is utilizing a data flow which is attempting to transform a pretty complex xml file into a parquet file. I am doing this by flattening the xml from different points so that I can export each array into its own parquet file.
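The idea of flattening from different points, so that each array ends up in its own output, can be sketched outside of data flows in plain Python. This is an illustration only, not the poster's data flow: the sample XML, the `split_arrays` helper, and the path names are hypothetical. Each nested array becomes its own list of rows, and every row carries the parent key so the outputs can be joined again later.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

# Made-up sample with two nested arrays under each parent record.
SAMPLE_XML = """<customers>
  <customer id="c1">
    <name>Ada</name>
    <orders><order><sku>A-1</sku></order><order><sku>B-2</sku></order></orders>
    <phones><phone>555-0100</phone></phones>
  </customer>
</customers>"""


def split_arrays(xml_text, parent_tag, key_attr, array_paths):
    """Flatten each nested array into its own table, keyed by the parent attribute."""
    root = ET.fromstring(xml_text)
    tables = defaultdict(list)
    for parent in root.iter(parent_tag):
        key = parent.get(key_attr)
        for table_name, path in array_paths.items():
            for element in parent.findall(path):
                row = {key_attr: key}
                children = list(element)
                if children:
                    # Array of objects: one column per child element.
                    for child in children:
                        row[child.tag] = (child.text or "").strip()
                else:
                    # Array of scalars: one column named after the element.
                    row[element.tag] = (element.text or "").strip()
                tables[table_name].append(row)
    return dict(tables)


tables = split_arrays(
    SAMPLE_XML,
    parent_tag="customer",
    key_attr="id",
    array_paths={"orders": "orders/order", "phones": "phones/phone"},
)
for name, table_rows in tables.items():
    print(name, table_rows)
```

Each entry in `tables` could then be written to a separate file.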

Microsoft Learn

learn.microsoft.com › en-us › azure › data-factory › format-xml

XML format - Azure Data Factory & Azure Synapse | Microsoft Learn

May 15, 2024 - When previewing XML files, data is shown with JSON hierarchy, and you use JSON path to point to the fields. The following properties are supported in the copy activity *source* section. Learn more from XML connector behavior. ... In mapping data flows, you can read XML format in the following data stores: Azure Blob Storage, Azure Data Lake Storage Gen1, Azure Data Lake Storage Gen2, Amazon S3 and SFTP.

LinkedIn

linkedin.com › pulse › building-end-to-end-pipelines-writing-parquet-files-azure-sain-yc39c

Building End-to-End Pipelines for Writing Parquet Files ...

Microsoft Learn

learn.microsoft.com › en-us › azure › data-factory › tutorial-data-flow-write-to-lake

Best practices for writing to files to data lake with data flows - Azure Data Factory | Microsoft Learn

May 15, 2024 - If you're new to Azure Data Factory, see Introduction to Azure Data Factory. In this tutorial, you'll learn best practices that can be applied when writing files to ADLS Gen2 or Azure Blob Storage using data flows. You'll need access to an Azure Blob Storage Account or Azure Data Lake Store Gen2 account for reading a parquet file and then storing the results in folders.

Microsoft Learn

learn.microsoft.com › en-us › fabric › data-factory › format-parquet

How to configure Parquet format in the pipeline of Data Factory in Microsoft Fabric - Microsoft Fabric | Microsoft Learn

October 13, 2025 - This article explains how to configure Parquet format in the pipeline of Data Factory in Microsoft Fabric.

Azure Docs

docs.azure.cn › en-us › data-factory › connector-troubleshoot-parquet

Troubleshoot the Parquet format connector - Azure Data Factory & Azure Synapse | Azure Docs

Message: Column %column; does not exist in Parquet file. Cause: The source schema is a mismatch with the sink schema. Recommendation: Check the mappings in the activity. Make sure that the source column can be mapped to the correct sink column. Message: Incorrect format of %srcValue; for converting to %dstType;. Cause: The data can't be converted into the type that's specified in mappings.source.

Microsoft Learn

learn.microsoft.com › en-us › answers › questions › 41509 › azure-data-factory-convert-excel-file-copied-from

Azure data factory convert excel file copied from Sharepoint online to parquet - Microsoft Q&A

June 30, 2020 - Read more here : https://learn.microsoft.com/en-us/azure/data-factory/format-excel · Hence, you can convert the copied Excel file to Parquet format by adding another copy activity that copies data from the xls files in your ADLS Gen1 to a Parquet dataset, which can still be on the same ADLS Gen1.
