You are referring to the problem of Multiple Kernel Learning (MKL), where you can train different kernels for different groups of features. I have used this in a multi-modal case, where I wanted different kernels for image and text.

I am not sure if you actually can do it via scikit-learn.

There are some libraries available on GitHub, for example this one: https://github.com/IvanoLauriola/MKLpy

Hopefully, it can help you to achieve your goal.

Answer from alift on Stack Overflow

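Multiple kernel learning is possible in sklearn, at least for a fixed combination of kernels. Specify `kernel='precomputed'` and pass the kernel matrix you want to use to `fit`.

Suppose your kernel matrix is the sum of two other kernel matrices. You can compute `K1` and `K2` however you like and call `fit(X=K1 + K2, y=y)` on the `SVC` instance. At prediction time, pass the kernel matrix between the test samples and the training samples, built the same way.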
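As a minimal sketch of the precomputed-kernel approach above: the data is synthetic, the two feature groups `X_a` and `X_b`, the choice of a linear plus an RBF kernel, and the helper `combined_kernel` are all assumptions made for illustration. The kernels are summed with fixed, equal weights; nothing here learns the weights.

```python
import numpy as np
from sklearn.svm import SVC
from sklearn.metrics.pairwise import linear_kernel, rbf_kernel

# Synthetic data with two hypothetical feature groups (e.g. image and text).
rng = np.random.default_rng(0)
X_a = rng.normal(size=(40, 3))   # first feature group
X_b = rng.normal(size=(40, 5))   # second feature group
y = (X_a[:, 0] + X_b[:, 0] > 0).astype(int)

train = np.arange(30)
test = np.arange(30, 40)

def combined_kernel(rows, cols):
    """Sum of a linear kernel on group A and an RBF kernel on group B."""
    K1 = linear_kernel(X_a[rows], X_a[cols])
    K2 = rbf_kernel(X_b[rows], X_b[cols], gamma=0.5)
    return K1 + K2

# Training kernel: (n_train, n_train). Test kernel: (n_test, n_train).
K_train = combined_kernel(train, train)
K_test = combined_kernel(test, train)

clf = SVC(kernel="precomputed")
clf.fit(K_train, y[train])
pred = clf.predict(K_test)
print(pred)
```

Note that the matrix passed to `predict` has one row per test sample and one column per training sample, since the model needs the kernel value between each test point and each training point.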

The linear kernel is what you would expect: a linear model. I believe the polynomial kernel is similar, but the boundary is a polynomial of some defined but arbitrary order (e.g. order 3: $a = b_1 + b_2 \cdot X + b_3 \cdot X^2 + b_4 \cdot X^3$).

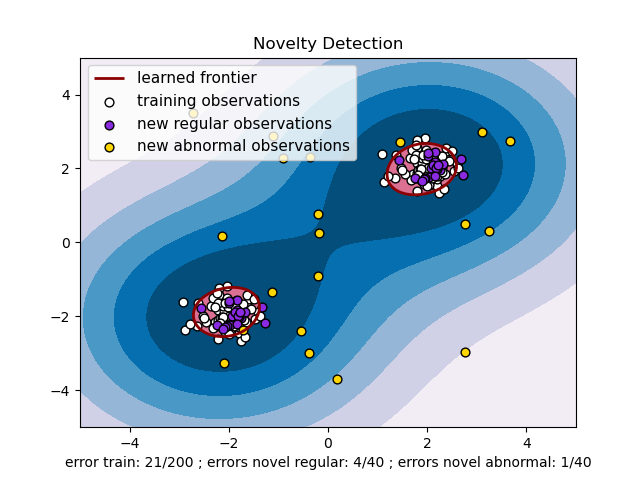

RBF places normal (Gaussian) curves around the data points and sums them, so the decision boundary is a contour of that sum, for example the curve where the sum crosses 0.5 (see the picture).

I am not certain what the sigmoid kernel is, unless it is similar to the logistic regression model, where a logistic function defines the boundary as the curve where the logistic value (a modeled probability) exceeds some threshold, such as 0.5, as in the normal-curve case.

This assumes the reader has basic knowledge of kernels.

Linear Kernel: $K(X, Y) = X^T Y$

Polynomial kernel: $K(X, Y) = (γ\cdot X^T Y + r)^d , γ > 0$

Radial basis function (RBF) Kernel: $K(X, Y) = \exp(-\|X-Y\|^2/(2σ^2))$ which in simple form can be written as $\exp(-γ \cdot \|X - Y\|^2), γ > 0$, with $γ = 1/(2σ^2)$

Sigmoid Kernel: $K(X, Y) = \tanh(γ\cdot X^TY + r) $ which is similar to the sigmoid function in logistic regression.

Here $r$, $d$, and $γ$ are kernel parameters.
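To make the four formulas concrete, here is a small plain-Python sketch that evaluates each kernel on a pair of vectors. The function names and default parameter values are illustrative assumptions, not scikit-learn's API or defaults.

```python
import math

def dot(x, y):
    """Inner product X^T Y of two equal-length vectors."""
    return sum(a * b for a, b in zip(x, y))

def linear_kernel(x, y):
    # K(X, Y) = X^T Y
    return dot(x, y)

def polynomial_kernel(x, y, gamma=1.0, r=0.0, d=3):
    # K(X, Y) = (gamma * X^T Y + r)^d
    return (gamma * dot(x, y) + r) ** d

def rbf_kernel(x, y, gamma=1.0):
    # K(X, Y) = exp(-gamma * ||X - Y||^2)
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)

def sigmoid_kernel(x, y, gamma=1.0, r=0.0):
    # K(X, Y) = tanh(gamma * X^T Y + r)
    return math.tanh(gamma * dot(x, y) + r)

x, y = [1.0, 2.0], [3.0, 4.0]
print(linear_kernel(x, y))
print(polynomial_kernel(x, y, gamma=1.0, r=1.0, d=2))
print(rbf_kernel(x, y, gamma=0.5))
print(sigmoid_kernel(x, y, gamma=0.1, r=-1.0))
```

The RBF kernel depends only on the distance between the two points and equals 1 when they coincide, while the other three depend on the inner product.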