Videos

Here's a simplified description. DE is an optimisation technique which iteratively modifies a population of candidate solutions to make it converge to an optimum of your function.

You first initialise your candidate solutions randomly. Then at each iteration and for each candidate solution x you do the following:

- you produce a trial vector: v = a + ( b - c ) / 2, where a, b, c are three distinct candidate solutions picked randomly among your population.

- you randomly swap vector components between x and v to produce v'. At least one component from v must be swapped.

- you replace x in your population with v' only if it is a better candidate (i.e. it better optimise your function).

(Note that the above algorithm is very simplified; don't code from it, find proper spec. elsewhere instead)

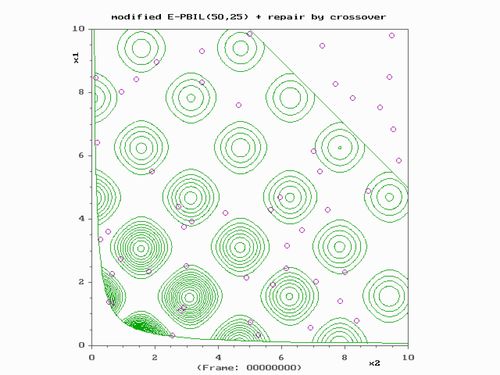

Unfortunately the Wikipedia article lacks illustrations. It is easier to understand with a graphical representation, you'll find some in these slides: http://www-personal.une.edu.au/~jvanderw/DE_1.pdf .

It is similar to genetic algorithm (GA) except that the candidate solutions are not considered as binary strings (chromosome) but (usually) as real vectors. One key aspect of DE is that the mutation step size (see step 1 for the mutation) is dynamic, that is, it adapts to the configuration of your population and will tend to zero when it converges. This makes DE less vulnerable to genetic drift than GA.

Answering my own question...

Overview

- The principal difference between Genetic Algorithms and Differential Evolution (DE) is that Genetic Algorithms rely on crossover while evolutionary strategies use mutation as the primary search mechanism.

- DE generates new candidates by adding a weighted difference between two population members to a third member (more on this below).

- If the resulting candidate is superior to the candidate with which it was compared, it replaces it; otherwise, the original candidate remains unchanged.

Definitions

- The population is made up of

NPcandidates. Xi= A parent candidate at indexi(indexes range from0toNP-1) from the current generation. Also known as the target vector.- Each candidate contains

Dparameters. Xi(j)= The jth parameter in candidateXi.Xa,Xb,Xc= three random parent candidates.- Difference vector =

(Xb - Xa) F= A weight that determines the rate of the population's evolution.- Ideal values: [0.5, 1.0]

CR= The probability of crossover taking place.- Range: [0, 1]

Xc`= A mutant vector obtained through the differential mutation operation. Also known as the donor vector.Xt= The child ofXiandXc`. Also known as the trial vector.

Algorithm

- For each candidate in the population

for (int i = 0; i<NP; ++i)

- Choose three distinct parents at random (they must differ from each other and

i)

do

{

a = random.nextInt(NP);

} while (a == i)

do

{

b = random.nextInt(NP);

} while (b == i || b == a);

do

{

c = random.nextInt(NP);

} while (c == i || c == b || c == a);

- (Mutation step) Add a weighted difference vector between two population members to a third member

Xc` = Xc + F * (Xb - Xa)

- (Crossover step) For every variable in

Xi, apply uniform crossover with probabilityCRto inherit fromXc`; otherwise, inherit fromXi. At least one variable must be inherited fromXc`

int R = random.nextInt(D);

for (int j=0; j < D; ++j)

{

double probability = random.nextDouble();

if (probability < CR || j == R)

Xt[j] = Xc`[j]

else

Xt[j] = Xi[j]

}

- (Selection step) If

Xtis superior toXithenXtreplacesXiin the next generation. Otherwise,Xiis kept unmodified.

Resources

- See this for an overview of the terminology

- See Optimization Using Differential Evolution by Vasan Arunachalam for an explanation of the Differential Evolution algorithm

- See Evolution: A Survey of the State-of-the-Art by Swagatam Das and Ponnuthurai Nagaratnam Suganthan for different variants of the Differential Evolution algorithm

- See Differential Evolution Optimization from Scratch with Python for a detailed description of an implementation of a DE algorithm in python.