Starting with ML, need help understanding Gradient Descent.

There’s a couple parts to your question here...

But first, I want to suggest you go back through those first lectures on gradient descent and really try to internalize and understand the concept. I will try to describe the what/how/why of it here to give you fresh eyes to look at it.

So to answer the what/how/why in your question...

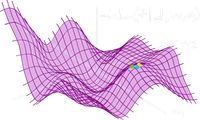

Imagine standing at the top of a mountain and you want to find the fastest pathway down. There are marker flags on the mountain that show the elevation down the slope. One way to find the fastest way down is to take a ball, roll it down the hill, then chase after it. Sure, the ball will bounce around a bit but gravity will ensure that the ball more or less follows the fastest path down the slope.

Gradient Descent is that ball.

As the ball rolls down the hill its elevation constantly changes. As it passes the marker flags you know exactly what the elevation is, but when the ball is in between, you have to estimate.

The marker flags are your data points.

You might not know what the terrain is doing exactly between the markers but you know there is ground there, so you can make an estimate of the ball’s elevation. If the ball is halfway between the 100m and 200m markers, you’ll probably be safe to guess the elevation of that ball is 150m.

This is interpolation. This is how you get the values in between your datapoints.
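To make the 150m guess concrete, here is a minimal sketch of straight-line (linear) interpolation between two markers. The function name `interpolate` and its parameters are made up for illustration, and it assumes the slope between the two flags is even.

```python
def interpolate(fraction, low_elevation, high_elevation):
    """Estimate the elevation a given fraction of the way between two markers.

    Assumes the ground rises evenly between the markers (linear interpolation).
    """
    return low_elevation + fraction * (high_elevation - low_elevation)

# Ball halfway between the 100m and 200m marker flags.
print(interpolate(0.5, 100.0, 200.0))  # 150.0
```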

So why is this important...

Gradient Descent is an optimization algorithm. It is a ball that will find its way downhill and will generally do so quite fast. This is the tool (or rather, one of the tools) you need to implement in order for your much larger machine learning algorithm to learn and get better at its task. To train your algorithm it needs a way to know that it’s getting better so it can promote behaviours that make it better and punish those that don’t. However, instead of the program thinking “how do I get better?” you actually want it thinking “how can I be less bad?”

Or “How can I minimize error?”.

When training your algorithm you want to find the fastest pathway down that error mountain to be at the lowest error as fast as possible. You need a ball to do that. Gradient Descent is that ball. This is why it is so important to understand how to use that ball.
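Here is roughly what "the ball" looks like in code. This is only a sketch: the error function `(w - 3)^2`, the starting point, the learning rate, and the step count are all made-up values chosen so the example is easy to follow, not anything from a real model.

```python
def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, like the ball rolling downhill."""
    w = start
    for _ in range(steps):
        # The gradient points uphill, so subtract it to move downhill.
        w = w - learning_rate * grad(w)
    return w

# Hypothetical "error mountain": error(w) = (w - 3)^2, lowest point at w = 3.
def error(w):
    return (w - 3) ** 2

# Its slope, worked out by hand: d/dw (w - 3)^2 = 2 * (w - 3).
def error_grad(w):
    return 2 * (w - 3)

w_min = gradient_descent(error_grad, start=10.0)
print(w_min, error(w_min))  # w_min ends up very close to 3, error close to 0
```

The learning rate is the size of each step. Too large and the ball overshoots the valley, too small and it takes forever to get down.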

Keep it simple! How to understand Gradient Descent algorithm

Can someone eli5 gradient descent and back-propagation

1 - you're basically on the right track, it's the graph of loss with respect to all the weights and biases. And yes, this can be an ungodly large number of dimensions. One dimension for every parameter you can change.

3 - neurons don't have one weight, they have a bunch. For a simple feed forward network, each neuron has one bias weight, and then one weight for every neuron in the previous layer. So if you have N neurons in layer L, and M neurons in layer L-1, then you have N * (M+1) weights in layer L (each neuron has one weight for each neuron in the previous layer, plus a bias... so each neuron has M + 1 weights and there are N neurons, so N * (M+1) total).
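If it helps, the N * (M+1) count can be checked with a few lines of code. The function names and the layer sizes below are hypothetical, and this assumes a plain fully connected feed forward network like the one described above.

```python
def layer_param_count(n_neurons, n_prev):
    """Weights in one layer: each neuron has n_prev weights plus one bias."""
    return n_neurons * (n_prev + 1)

def network_param_count(layer_sizes):
    """Total weights and biases; layer_sizes[0] is the input layer (no weights)."""
    return sum(
        layer_param_count(n, m)
        for m, n in zip(layer_sizes, layer_sizes[1:])
    )

# Example: N = 3 neurons in layer L, M = 4 neurons in layer L-1.
print(layer_param_count(3, 4))         # 15
# Example network with 4 inputs, a hidden layer of 3, and 2 outputs.
print(network_param_count([4, 3, 2]))  # 23
```

That total is also the number of dimensions of the loss graph from point 1.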

4 - you can definitely approximate the gradient by taking a few small steps, seeing what happens, and then using that information to calculate an approximate gradient. If you've taken Ng's class, he mentions that actually as a way to test your backprop, since your manual calculation should have roughly the same answer as the calculus based way to calculate the gradient. It's like this... how do you calculate the derivative of x^2 at x=2? You have two options:

f(x) = x^2

f'(x) = 2x

derivative at x=2 is: f'(2) = 4

that's the calculus way. You can also do it manually:

(f(2 + e) - f(2))/e

you can use a calculator if you like:

((2 + .00001)^2 - 2^2)/.00001

comes out to about 4. The smaller you make e, the closer to 4 it gets.
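Same calculation as a short script, in case you'd rather not punch it into a calculator. The helper name `forward_difference` is just a label picked for this sketch.

```python
def f(x):
    return x ** 2

def forward_difference(func, x, e):
    """Approximate the derivative of func at x using a small shift e."""
    return (func(x + e) - func(x)) / e

# Shrinking e pushes the estimate toward the calculus answer f'(2) = 4.
for e in (0.1, 0.001, 0.00001):
    print(e, forward_difference(f, 2.0, e))
```

For this particular function the estimate works out to 4 + e (plus a little floating point noise), which is why it closes in on 4 as e shrinks.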

Your idea is basically that last option, pick some small shift, see what happens, find the difference, that gives you some information about your gradient. It's a little more complicated in multiple dimensions, but not by much... the gradient operator is pretty simple thankfully.
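A rough sketch of the multi-dimensional version: shift one parameter at a time, keep the rest fixed, and compare against the gradient worked out by hand. The toy `loss` function here is invented for the example and stands in for a real network's loss.

```python
def numerical_gradient(func, point, e=1e-5):
    """Approximate the gradient by nudging one coordinate at a time."""
    base = func(point)
    grad = []
    for i in range(len(point)):
        shifted = list(point)
        shifted[i] += e  # small shift in dimension i only
        grad.append((func(shifted) - base) / e)
    return grad

# Hypothetical two-parameter loss: x^2 + 3xy.
def loss(p):
    x, y = p
    return x ** 2 + 3 * x * y

# Calculus version: partial derivatives are (2x + 3y, 3x).
def loss_grad(p):
    x, y = p
    return [2 * x + 3 * y, 3 * x]

point = [1.0, 2.0]
approx = numerical_gradient(loss, point)
exact = loss_grad(point)
print(approx, exact)  # the two should agree to several decimal places
```

This is the gradient check idea mentioned above: fine for testing, but it needs one extra loss evaluation per parameter, so it gets slow fast on a real network.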

The 'real' way to do it though... that's a calc 3 question.

Listen... real talk. I failed calc 3 the first time I took it. I didn't like my teacher, I didn't know how it applied, I was busy with my coding classes, etc. Then I took physics 300 and coded up all this cool stuff... D&D gelatinous cubes, rope bridges, suddenly this seemingly useless calc 3 stuff became incredibly important to me. If you care to actually understand backprop, you must get a very high level of comfort with what exactly a gradient is and how to calculate it, full stop. You need to move on from thinking 'about' the gradient and the chain rule and stuff, and learn to think 'with' it instead. If you have any hesitation or confusion on these low level ideas, you'll never be able to compose them into the crazy bigger picture ideas you're trying to grapple with, you know? It's like trying to learn algebra before you know how to add. So: anything less than a proper mathematical understanding of the gradient will leave you crippled in your efforts. For a little extra inspiration, read this article by Michael Nielsen. Thought as technology... calc 3 is a thought technology. It's a tool. It will be annoyingly time consuming to properly acquire this tool, but a hunter takes care of his weapons. You can't head out under the blood moon without taking the time to sharpen your axe. If you do, you're going to get your shit kicked in.

Two years ago, I had no knowledge of stats. At all. Never took a class. I wanted to understand machine learning. I had a choice... I could either watch a bunch of Siraj and read some medium articles and hope I'd eventually 'get it', or I could arm up proper. I bought a copy of Hogg and Craig's 'mathematical statistics'. In hindsight I could have picked a better book, and it was a massive time investment... took me 8 months of daily work, but I did it. I wouldn't say I've mastered statistics yet, but I'm very comfortable with huge chunks of it, it's been immensely useful. I'd even say it's changed my life in surprising ways... stats is a huge paradigm shift. But my point, don't let yourself be limited by your calc 3 experience. School hasn't even been where I've done my best learning, you're better off learning to take control of your own journey. We all eventually have to become autodidacts if we're serious about keeping up with this field, no time like the present to get into the habit. If you really care to understand this stuff, get serious about it. Pick a MOOC, pick a textbook, hire a tutor, audit a class at a local community college, go through a course in brilliant.org... it seriously doesn't matter what you do, so long as you pick a (real, not fluffy) path to mastery, and you stick with it until it's done. Go in 6 week sprints. Take a few days to pick your path, schedule daily time, and then every day put your time in on the study plan you picked. I don't finish everything I start (I have a lambda calculus text I might not get back to) but you at least need to have a planned period of focus before re-evaluating... the long term work is what really improves you as a practitioner, you never get up a head of steam without a proper ritual and a proper plan. Especially pay attention to exercises... for every hour I spend reading, I try and spend at least an hour or two solving.

The gradient is seriously not hard to understand, I'm talking this up a ton, but in all reality, a decent resource will get you functional in like... 10 hours, if that, so this doesn't need to be a 6 month detour (unless you want to give calc 3 the finger and blow through Strang's just to prove to yourself you can). For a comfortable level of understanding though, two weeks of daily work and you won't have to wonder, you'll know what the gradient is, you'll have calculated it on dozens of functions, you'll have used it to solve dozens of problems, and you'll have seen it visualized on a number of graphs. Your brain will pick out the patterns, soon you'll 'see'. Once seen, it can never be unseen. (geez, apparently I have bloodborne on the brain).

If you decide to seriously understand ML by the way, you will need the gradient all over the place. Things like Lagrangian multipliers and calculus of variations will be completely incomprehensible without a strong foundation in calc, and that stuff does come up if you want to rigorously ground yourself in the theory.

6 - for your last point, and you'll see why I saved this for last... I don't expect you to fully understand my answer. You will need to come back to this after your calc adventure, then you'll be able to get it I think. But just in case... no, you calculate the gradient all at once. There is one gradient, with as many dimensions as there are free parameters. The reason it 'feels' like you're breaking it into chunks, is because your gradient gives you an equation with respect to a given parameter w^L_{i,j}. Once you understand the gradient, you can write it down, and you'll see there's a chunk from your last layer that appears in every layer further down... and that chunk composes with a chunk in layer two that appears in every layer later down... and so on. You'll see it pretty naturally once you have your calc down I think.
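For when you come back to this: here is one common way to write those 'chunks' down. The notation is an assumption on my part (it follows the usual textbook convention): C is the cost, sigma the activation function, z^l the weighted input to layer l, a^l its activation, b^l its biases, L the last layer, and delta^l the reusable chunk for layer l.

```latex
\begin{aligned}
\delta^{L} &= \nabla_{a} C \odot \sigma'(z^{L}) \\
\delta^{l} &= \left( (w^{l+1})^{T} \delta^{l+1} \right) \odot \sigma'(z^{l}) \\
\frac{\partial C}{\partial w^{l}_{i,j}} &= \delta^{l}_{i} \, a^{l-1}_{j} \\
\frac{\partial C}{\partial b^{l}_{i}} &= \delta^{l}_{i}
\end{aligned}
```

The second line is the part that composes: each layer's chunk is built from the chunk of the layer after it, which is just the chain rule applied over and over.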

I'm afraid I don't have a good suggestion for getting up to speed with calc. I liked Alcock's 'how to think about analysis' as a primer for getting into math study (I highly recommend it), and it does ground differentiating and integration in a way that you might find really illuminating... don't know why they don't always teach calc like this, but whatever. For your real work though of properly grounding your understanding of the gradient and the chain rule (and how to think about high dimensional spaces in general) I don't have a good suggestion... maybe do a search on r/math, that's my go-to whenever I'm looking for textbook suggestions. There's all kinds of threads talking about all kinds of good resources, you'll definitely find something that fits. Then you'll have my problem, haha... too many powerful new ways of thinking to learn, too many amazing books, not enough hours in the day. I'm 100% convinced now... anyone serious about this stuff should spend a fairly significant amount of their time honing their math abilities, it's a huge learning accelerator, it's crazy. After two years of study, backprop looks so easy and obvious, you'll never get that insight without working on your fundamentals. I did it, you can do it too. You don't need to hit it nearly as hard as I did even (I'm trying to push up into some gnarly research papers) but for backprop and calc 3? You got this. You just have to roll up your sleeves, set down the lightweight youtube videos, and get really serious. Absolutely nothing will hold you back if you focus your attention on one spot for long enough, you know? If you get stuck, it's usually just because you're missing foundational pieces... well, here they are. Remember this too... there will be concepts in the future that you struggle with where your 'missing foundational pieces' are much less clear. There you've got extra work to do to figure out where you need to backtrack. But you'll get those too when they come. It all comes eventually.

Gradient Descent: All You Need to Know

Good article, although I did not quite like this statement:

"Even the formulas for the gradients for each cost function can be found online without knowing how to derive them yourself."

Yes, it's true, but is that the goal, to just plug in some formulas?
