How does gradient descent know which values to pick next?

I'm not exactly sure this is what you mean, but in practice it can be left to your discretion.

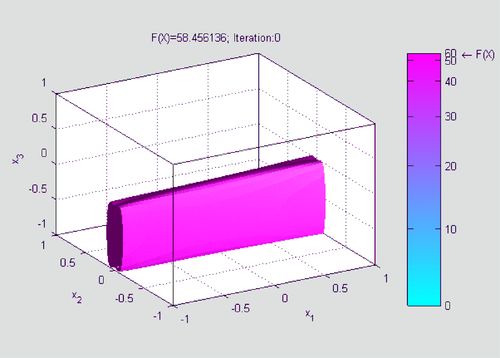

You can recompute the derivatives for all of the parameters at every iteration, or you can use approximations. In particular, if the target function isn't a nice clean function like f(x,y,z) that you can differentiate by hand, I typically just estimate the partial derivatives with respect to x, y, and z numerically and move along the ones that decrease the target the most. If the function is convex, working from approximations shouldn't typically add much to the running time. If it's a clean function, you can compute the derivatives analytically.
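To make the "estimate the partials numerically" idea concrete, here is a minimal sketch. It assumes central finite differences for the estimate and a plain fixed-step update; the names `numerical_gradient`, `gradient_descent`, and `target`, along with the step size and iteration count, are made up for illustration and aren't from any particular library.

```python
def numerical_gradient(f, point, h=1e-6):
    """Estimate each partial derivative of f at `point` with central differences."""
    grad = []
    for i in range(len(point)):
        forward = list(point)
        backward = list(point)
        forward[i] += h
        backward[i] -= h
        grad.append((f(forward) - f(backward)) / (2 * h))
    return grad


def gradient_descent(f, start, learning_rate=0.1, steps=200):
    """Recompute the estimated gradient every iteration and step against it."""
    point = list(start)
    for _ in range(steps):
        grad = numerical_gradient(f, point)
        point = [p - learning_rate * g for p, g in zip(point, grad)]
    return point


def target(v):
    # Hypothetical convex target with its minimum at (1, -2, 3).
    x, y, z = v
    return (x - 1) ** 2 + (y + 2) ** 2 + (z - 3) ** 2


minimum = gradient_descent(target, [0.0, 0.0, 0.0])
print(minimum)
```

So the "next values" are just the current values minus the learning rate times the estimated gradient. For a target you can differentiate by hand, you would swap `numerical_gradient` for the analytic derivatives.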

Not sure if that's what you were asking though.

[D] An Introduction to Gradient Descent

Gradient descent can be difficult to understand, but I found that if you take small steps in the right direction you'll end up where you want to be.

ELI5: Gradient Descent Algorithm

Imagine you're stranded in a mountainous area, and you're blindfolded. You'll be rescued if you reach the lowest point in a valley. Your only knowledge of your immediate surroundings comes from placing your foot one step away from yourself and estimating which direction takes you the furthest downward. That's it basically, and it's quite accurate for a simple GD problem in 3 dimensions. It can be further complicated if there are multiple valleys, since you can settle at the bottom of one that isn't the lowest overall. Real problems will usually have more dimensions, so just replace "checking the direction that goes downward the most" with "taking the gradient (the vector of partial derivatives) and stepping in the opposite direction, which is the direction of steepest descent". I'm a bit rusty on this topic but I think that's accurate enough to get the idea.
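Here's a rough sketch of the blindfolded hiker, including the "multiple valleys" complication. To keep it short it assumes a one-dimensional landscape; the function `height`, its derivative `slope`, the step size, and the starting points are all made up for illustration.

```python
def height(x):
    # Hypothetical landscape with two valleys: a deeper one on the left
    # and a shallower one on the right.
    return x**4 - 3 * x**2 + x


def slope(x):
    # Analytic derivative of height(x).
    return 4 * x**3 - 6 * x + 1


def walk_downhill(start, step_size=0.01, steps=1000):
    """Take many small steps against the slope, like the hiker feeling for downhill."""
    x = start
    for _ in range(steps):
        x -= step_size * slope(x)
    return x


left_valley = walk_downhill(-2.0)
right_valley = walk_downhill(2.0)
print(left_valley, height(left_valley))
print(right_valley, height(right_valley))
```

Both walks stop where the slope is roughly zero, but the one that starts on the right settles in the shallower valley. That's the local-minimum problem: the hiker only ever feels the ground right under their feet.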
