For SVM, it's important to have the same scaling for all features and normally it is done through scaling the values in each (column) feature such that the mean is 0 and variance is 1. Another way is to scale it such that the min and max are for example 0 and 1. However, there isn't any difference between [0, 1] and [0, 10]. Both will show the same performance.

If you insist on using SVM for classification, another way that may result in improvement is ensembling multiple SVM. In case you are using Python, you can try BaggingClassifier from sklearn.ensemble.

Also notice that you can't expect to get any performance from a real set of training data. I think 97% is a very good performance. It is possible that you overfit the data if you go higher than this.

Answer from Mahsa.Ghasemi on Stack Overflowmachine learning - Techniques to improve the accuracy of SVM classifier - Stack Overflow

scikit learn - Making SVM run faster in python - Stack Overflow

How to implement Incremental Learning for Support Vector Classifier in ML?

Look into extending online learning. If you are using a linear SVM, you can. Save the weights learned and learn. Basically your goal is transfer learning and online learning bits.

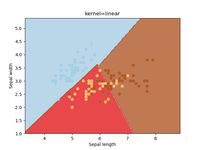

More on reddit.comHow to draw the decision boundary of SVM

Videos

For SVM, it's important to have the same scaling for all features and normally it is done through scaling the values in each (column) feature such that the mean is 0 and variance is 1. Another way is to scale it such that the min and max are for example 0 and 1. However, there isn't any difference between [0, 1] and [0, 10]. Both will show the same performance.

If you insist on using SVM for classification, another way that may result in improvement is ensembling multiple SVM. In case you are using Python, you can try BaggingClassifier from sklearn.ensemble.

Also notice that you can't expect to get any performance from a real set of training data. I think 97% is a very good performance. It is possible that you overfit the data if you go higher than this.

some thoughts that have come to my mind when reading your question and the arguments you putting forward with this author claiming to have achieved acc=99.51%. My first thought was OVERFITTING. I can be wrong, because it might depend on the dataset - But the first thought will be overfitting. Now my questions;

1- Has the author in his article stated whether the dataset was split into training and testing set? 2- Is this acc = 99.51% achieved with the training set or the testing one?

With the training set you can hit this acc = 99.51% when your model is overfitting. Generally, in this case the performance of the SVM classifier on unknown dataset is poor.

If you want to stick with SVC as much as possible and train on the full dataset, you can use ensembles of SVCs that are trained on subsets of the data to reduce the number of records per classifier (which apparently has quadratic influence on complexity). Scikit supports that with the BaggingClassifier wrapper. That should give you similar (if not better) accuracy compared to a single classifier, with much less training time. The training of the individual classifiers can also be set to run in parallel using the n_jobs parameter.

Alternatively, I would also consider using a Random Forest classifier - it supports multi-class classification natively, it is fast and gives pretty good probability estimates when min_samples_leaf is set appropriately.

I did a quick tests on the iris dataset blown up 100 times with an ensemble of 10 SVCs, each one trained on 10% of the data. It is more than 10 times faster than a single classifier. These are the numbers I got on my laptop:

Single SVC: 45s

Ensemble SVC: 3s

Random Forest Classifier: 0.5s

See below the code that I used to produce the numbers:

import time

import numpy as np

from sklearn.ensemble import BaggingClassifier, RandomForestClassifier

from sklearn import datasets

from sklearn.multiclass import OneVsRestClassifier

from sklearn.svm import SVC

iris = datasets.load_iris()

X, y = iris.data, iris.target

X = np.repeat(X, 100, axis=0)

y = np.repeat(y, 100, axis=0)

start = time.time()

clf = OneVsRestClassifier(SVC(kernel='linear', probability=True, class_weight='auto'))

clf.fit(X, y)

end = time.time()

print "Single SVC", end - start, clf.score(X,y)

proba = clf.predict_proba(X)

n_estimators = 10

start = time.time()

clf = OneVsRestClassifier(BaggingClassifier(SVC(kernel='linear', probability=True, class_weight='auto'), max_samples=1.0 / n_estimators, n_estimators=n_estimators))

clf.fit(X, y)

end = time.time()

print "Bagging SVC", end - start, clf.score(X,y)

proba = clf.predict_proba(X)

start = time.time()

clf = RandomForestClassifier(min_samples_leaf=20)

clf.fit(X, y)

end = time.time()

print "Random Forest", end - start, clf.score(X,y)

proba = clf.predict_proba(X)

If you want to make sure that each record is used only once for training in the BaggingClassifier, you can set the bootstrap parameter to False.

SVM classifiers don't scale so easily. From the docs, about the complexity of sklearn.svm.SVC.

The fit time complexity is more than quadratic with the number of samples which makes it hard to scale to dataset with more than a couple of 10000 samples.

In scikit-learn you have svm.linearSVC which can scale better.

Apparently it could be able to handle your data.

Alternatively you could just go with another classifier. If you want probability estimates I'd suggest logistic regression. Logistic regression also has the advantage of not needing probability calibration to output 'proper' probabilities.

Edit:

I did not know about linearSVC complexity, finally I found information in the user guide:

Also note that for the linear case, the algorithm used in LinearSVC by the liblinear implementation is much more efficient than its libsvm-based SVC counterpart and can scale almost linearly to millions of samples and/or features.

To get probability out of a linearSVC check out this link. It is just a couple links away from the probability calibration guide I linked above and contains a way to estimate probabilities.

Namely:

prob_pos = clf.decision_function(X_test)

prob_pos = (prob_pos - prob_pos.min()) / (prob_pos.max() - prob_pos.min())

Note the estimates will probably be poor without calibration, as illustrated in the link.