Google

developers.google.com › machine learning › linear regression

Linear regression | Machine Learning | Google for Developers

This module introduces linear regression, a statistical method used to predict a label value based on its features.
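A linear model predicts the label as a bias plus a weighted sum of the features, often written y' = b + w1x1 + ... + wnxn. The sketch below assumes the weights and bias have already been learned. The function name `predict` and the parameter values are hypothetical, chosen only for illustration.

```python
def predict(features: list[float], weights: list[float], bias: float) -> float:
    """Return the linear model's prediction: bias + sum of weight * feature."""
    if len(features) != len(weights):
        raise ValueError("features and weights must have the same length")
    return bias + sum(w * x for w, x in zip(weights, features))


# Hypothetical learned parameters for a two-feature model.
weights = [0.5, -1.25]
bias = 3.0
print(predict([4.0, 2.0], weights, bias))  # 3.0 + 0.5*4.0 - 1.25*2.0 = 2.5
```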

GeeksforGeeks

geeksforgeeks.org › machine learning › ml-linear-regression

Linear Regression in Machine learning - GeeksforGeeks

Linear Regression is a fundamental supervised learning algorithm used to model the relationship between a dependent variable and one or more independent variables.

Published 3 weeks ago
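With more than one independent variable, ordinary least squares can be solved through the normal equations (XᵀX)β = Xᵀy. The following is a minimal pure-Python sketch under that assumption. The helper names `solve` and `fit_multiple` are hypothetical, and the data are made-up, noise-free points generated from y = 1 + 2x1 + 3x2.

```python
def solve(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix, so row operations update a and b together.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate this column from every row below the pivot.
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_multiple(rows: list[list[float]], targets: list[float]) -> list[float]:
    """Fit y = b0 + b1*x1 + ... + bk*xk by ordinary least squares.

    Returns [b0, b1, ..., bk] from the normal equations (X^T X) beta = X^T y.
    """
    # Prepend a column of ones so the intercept is learned like any coefficient.
    x = [[1.0] + list(r) for r in rows]
    p = len(x[0])
    xtx = [[sum(row[i] * row[j] for row in x) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(x, targets)) for i in range(p)]
    return solve(xtx, xty)


# Hypothetical noise-free data generated from y = 1 + 2*x1 + 3*x2.
rows = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]]
targets = [1.0 + 2.0 * x1 + 3.0 * x2 for x1, x2 in rows]
coef = fit_multiple(rows, targets)
print(coef)  # intercept first, then one coefficient per feature
```

Forming XᵀX squares the condition number of the problem, so library solvers generally prefer QR or SVD factorizations; NumPy's `numpy.linalg.lstsq`, for example, is SVD-based.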

Linear Regression for newbies: r/learnmachinelearning

Welcome to r/learnmachinelearning - a community of learners and educators passionate about machine learning! This is your space to ask questions, share resources, and grow together in understanding ML concepts - from basic principles to advanced techniques. Whether you're writing your first ...

[D] Why does almost no one talk about how linear regression is implemented in practice?

I think it’s just because it is easier to explain gradient descent with a trivial linear regression example.
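The reply suggests gradient descent gets attention mainly because it is easy to explain on a trivial linear regression example. For comparison, here is a minimal batch gradient descent sketch for one feature. The learning rate, epoch count, and data (noise-free points on y = 2x + 1) are illustrative assumptions tuned only for this toy problem.

```python
def gradient_descent(xs: list[float], ys: list[float],
                     lr: float = 0.05, epochs: int = 5000) -> tuple[float, float]:
    """Fit y = w*x + b by batch gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Residuals of the current model on every training point.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Partial derivatives of MSE = (1/n) * sum(err^2) with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Step against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]  # noise-free points on y = 2x + 1
w, b = gradient_descent(xs, ys)
print(round(w, 6), round(b, 6))  # expect values very close to w = 2, b = 1
```

If the learning rate is too large relative to the scale of the features, the updates diverge instead of converging, which is one reason a direct least-squares solve is often preferred for small problems.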

Some of you say “linear regression” like it’s a bad word

Mixed models are a secret weapon.

How do you use Linear Regression?

Linear regression is a valuable tool, but it's only one of the tools in your toolbox. (Other basic tools are things like XMAs - XMAs of everything: prices, returns, vol, and even histograms - because things change. Moving up the complexity ladder you have clustering, PCA, RFs, ANNs, MCMC, etc.) You need to understand LR's strengths and weaknesses. It's well known, for example, that certain problems, like the XOR problem, are not linearly separable. So LR, a linear method, won't be able to solve that problem, whereas a multi-layer NN with non-linear activation functions will, as will an RF. On the other hand, LR is very fast to compute, and while you lose on accuracy, on balance you probably gain on robustness. LR models also typically extrapolate more robustly than RF and ANN models, where you would be very careful about allowing the inputs to take on values outside the historical range.

One huge advantage of LR models is the associated R² metric, which has a really intuitive and appealing interpretation: it tells you the proportion of the variance in the output variable that is accounted for by the variance in the input variable. So if R² is 10%, then 10% of the variation in the output - the thing you care about - is "explained" by variation in the input.

Let's take a step back and consider how you even measure whether a strategy is good. The really naive way is to run a so-called backtest, which I'm sure you already know about. But in my experience, that's not how the pros do it. Instead, they measure how good a model is by regressing the value of the indicator (let's say 1 means buy, 0 means hold and -1 means sell) against future returns, and you'll typically hope that your model explains a few percent of the variation in future returns. (The future returns are called the labels, a term borrowed from ML contexts where the inputs are typically images and the outputs are text labels - "person on bike", that kind of thing. But in finance the labels are almost always future returns over some time period.) If your R² is higher than, say, 10%, you're kind of kicking ass, and you can be very sure that even a simple trading strategy will work.

By the way, that simple strategy uses the LR in the sense that, in the simplified single-variable case, it multiplies the output of your indicator by the slope of your LR to get your expected future return. Now you can ask: is that return higher than my commissions? If yes, buy if the expected return is positive, else sell.

Pushing the boat out, let's say you have two or even three models that, from the above analysis, you think are promising. How do you combine them? Again for simplicity, assume each takes a value in the closed range [-1, 1]. The answer is again LR. Now you take the output signal from each model as the _input_ to another regression, again using the same labels - the future returns. This tells you whether the models cooperate or compete with each other, and you will know this by seeing whether combining them improves R². You can also inspect the slopes.

You might even regress the parameters of a model against the parameters of the same model trained on a month more of data. If your model is any good, the parameters should have some kind of stability over time, so your prior month's params should predict the current params.

And finally, it's a good smoke test for more complicated models. If you find your complicated RF model is doing worse than LR, then you know you need to go back to the drawing board.

Videos

Linear Regression Algorithm Tutorial | Linear Regression Explained ... (10:32)

Linear Regression Algorithm | Linear Regression Machine Learning ... (03:52:06)

Linear Regression In Machine Learning | ML Algorithms | Machine ... (28:49)

Linear Regression Algorithm | Linear Regression in Python | Machine ... (28:36)

Linear Regression Algorithm In Python From Scratch [Machine Learning ... (26:53)

Linear Regression vs Logistic Regression | Machine learning ... (37:06)

Statistical approach for modeling the relationship between a scalar dependent variable and one or more explanatory variables

Wikipedia

en.wikipedia.org › wiki › Linear_regression

Linear regression - Wikipedia

2 weeks ago - Linear regression is also a type of machine learning algorithm, more specifically a supervised algorithm, that learns from the labelled datasets and maps the data points to the most optimized linear functions that can be used for prediction on new datasets.

W3Schools

w3schools.com › python › python_ml_linear_regression.asp

Python Machine Learning Linear Regression

In Machine Learning, and in statistical modeling, that relationship is used to predict the outcome of future events. Linear regression uses the relationship between the data points to draw the straight line that best fits them.

ArcGIS Pro

pro.arcgis.com › en › pro-app › latest › tool-reference › geoai › how-linear-regression-works.htm

How Linear regression algorithm works—ArcGIS Pro | Documentation

Linear regression is a supervised machine learning method that is used by the Train Using AutoML tool and finds a linear equation that best describes the correlation of the explanatory variables with the dependent variable. This is achieved by fitting a line to the data using least squares.
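For a single explanatory variable, "fitting a line using least squares" has a closed form. The formulas below are the standard textbook result, not taken from the ArcGIS documentation: the coefficients of the fitted line ŷ = β̂0 + β̂1x are the values that minimize the sum of squared residuals.

```latex
(\hat{\beta}_0, \hat{\beta}_1) = \arg\min_{\beta_0, \beta_1} \sum_{i=1}^{n} \left( y_i - \beta_0 - \beta_1 x_i \right)^2

\hat{\beta}_1 = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
\hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}
```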

Medium

medium.com › @charan.sandaka5 › linear-regression-algorithm-in-machine-learning-2c26f2f928ea

Linear Regression Algorithm in Machine Learning | by Charan Sandaka | Medium

November 9, 2025 - Serves as a foundation for advanced algorithms. Assumes linear relationships only. Sensitive to outliers. Poor performance on complex, non-linear data. Struggles with multicollinearity. Whether solved through OLS for precision or gradient descent for scalability, linear regression teaches us the essence of all machine learning: finding patterns in data to make predictions.

TutorialsPoint

tutorialspoint.com › machine_learning › machine_learning_linear_regression.htm

Linear Regression in Machine Learning

In machine learning, linear regression is used for predicting continuous numeric values based on learned linear relation for new and unseen data.

ML Glossary

ml-cheatsheet.readthedocs.io › en › latest › linear_regression.html

Linear Regression — ML Glossary documentation

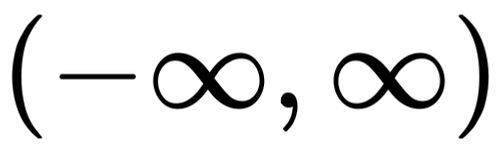

Linear Regression is a supervised machine learning algorithm where the predicted output is continuous and has a constant slope. It’s used to predict values within a continuous range (e.g. sales, price) rather than trying to classify them into categories (e.g.

Medium

medium.com › @mahnoorsalman96 › linear-regression-for-machine-learning-a-practical-approach-84e447afa188

Linear Regression for Machine Learning: A Practical Approach | by Mahnoor Salman | Medium

January 23, 2024 - These Python code snippets use common libraries like scikit-learn and NumPy to calculate regression metrics. Make sure to replace y_true and y_pred with your actual and predicted values, respectively, before running the code. In conclusion, linear regression, in its simplicity and complexity, remains a cornerstone in the field of machine learning for data analysts.
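The snippet mentions computing regression metrics from `y_true` and `y_pred`. Here is a dependency-free sketch of the usual ones (MAE, MSE, RMSE, R²). The function name `regression_metrics` is hypothetical and the sample values are illustrative; scikit-learn provides equivalents in `sklearn.metrics` (`mean_absolute_error`, `mean_squared_error`, `r2_score`).

```python
import math


def regression_metrics(y_true: list[float], y_pred: list[float]) -> dict[str, float]:
    """Compute MAE, MSE, RMSE and R^2 for paired true/predicted values.

    R^2 is undefined (division by zero) if every y_true value is identical.
    """
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)                   # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)    # total sum of squares
    return {
        "mae": mae,
        "mse": mse,
        "rmse": math.sqrt(mse),
        "r2": 1.0 - ss_res / ss_tot,
    }


y_true = [3.0, -0.5, 2.0, 7.0]
y_pred = [2.5, 0.0, 2.0, 8.0]
metrics = regression_metrics(y_true, y_pred)
print(metrics)
```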

Masters in Data Science

mastersindatascience.org › home › learning › machine learning algorithms › linear regression

What Is Linear Regression? | Master's in Data Science

December 15, 2023 - Linear regression is a type of supervised learning algorithm in which the data scientist trains the algorithm using a set of training data with correct outputs. You continue to refine the algorithm until it returns results that meet your expectations.

Online Manipal

onlinemanipal.com › home › blogs › 10 popular regression algorithms in machine learning

10 Popular Regression Algorithms in Machine Learning

November 28, 2025 - Ridge regression is another widely used linear regression algorithm in machine learning. When only one independent variable is used to predict the output, the model is called simple linear regression. Ridge regression is often preferred because it adds an L2 penalty on the coefficients to the least-squares loss, which shrinks them and reduces overfitting, particularly when features are correlated.
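Ridge regression adds a penalty λ times the squared coefficients to the least-squares loss. For a single feature with an unpenalized intercept, the slope has the closed form Σ(x - x̄)(y - ȳ) / (Σ(x - x̄)² + λ). The sketch below is a minimal illustration under those assumptions, with a hypothetical function `ridge_1d` and made-up data; libraries differ in how they scale λ, so the numbers are not directly comparable across tools.

```python
def ridge_1d(xs: list[float], ys: list[float], lam: float) -> tuple[float, float]:
    """Return (slope, intercept) minimizing sum((y - b - w*x)^2) + lam * w^2."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Centering lets the intercept be recovered afterwards without being penalized.
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / (sxx + lam)
    return slope, y_bar - slope * x_bar


xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 4.0, 6.0, 8.0, 10.0]  # made-up data on y = 2x
for lam in (0.0, 10.0, 30.0):
    # lam = 0 reproduces ordinary least squares; larger lam shrinks the slope.
    print(lam, ridge_1d(xs, ys, lam))
```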

AWS

aws.amazon.com › what is cloud computing? › cloud computing concepts hub › analytics › artificial intelligence

What is Linear Regression? - Linear Regression Model Explained - AWS

1 week ago - Identify the linear regression equation as y = 3x + 2. ... In machine learning, computer programs called algorithms analyze large datasets and work backward from that data to calculate the linear regression equation. Data scientists first train the algorithm on known or labeled datasets and then ...