algorithm - What is the time complexity of the merge step of mergesort? - Stack Overflow

Understanding merge sort Big O complexity


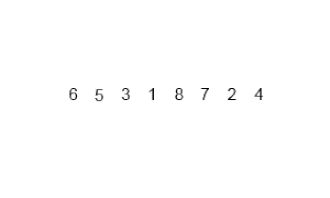

I'm going to be referring to this image. The Big O of merge sort is O(n log n).

In the example I posted above, n = 8, so the Big O should be 8 log(8) = 16. I think that's because at the first green level we go through 8 items and merge them, and we do the same thing at the second green level, so 8 + 8 = 16. But then I wondered: when we split the initial array (the purple steps), doesn't that add to the time complexity as well?
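As a quick sanity check on the arithmetic, here is a minimal sketch that assumes a base-2 logarithm and an n that is a power of two. The helper name `merge_work_per_level` is hypothetical and only for illustration. Under those assumptions, 8 single elements need three rounds of merging to become one array of 8, and each round touches all 8 elements, so the total is 8 * log2(8) = 24 rather than 16.

```python
import math


def merge_work_per_level(n):
    """Return the number of elements touched at each merge level.

    Assumes n is a power of two, so there are exactly log2(n) merge levels
    and every level touches all n elements once.
    """
    levels = int(math.log2(n))
    return [n] * levels


work = merge_work_per_level(8)
print(work)       # [8, 8, 8]
print(sum(work))  # 24
```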

The "split" step is the one that takes O(log n), and the merge step is O(n). I just realized that via a comment.

The split step of merge sort takes O(n) in total, not O(log(n)). The running time of the split step satisfies the recurrence:

T(n) = 2T(n/2) + O(1)

where T(n) is the running time for input size n, 2 is the number of subproblems, n/2 is the size of each subproblem, and O(1) is the constant time needed to split an array in half.

We also have the base cases: T(4) = O(1) and T(3) = O(1).

We might come up with (not really accurate):

T(n) = n/2 * O(1) = O(n/2) = O(n)
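To make the linear bound concrete, the sketch below counts how many split operations the recursion performs. It is only an illustration: the function name `count_splits` is hypothetical, and it assumes the recursion stops at pieces of length 1 rather than at the base cases given above. Under that assumption the count comes out to n - 1, which is O(n).

```python
def count_splits(n):
    """Count how many times an array of length n is split in half.

    Assumption: recursion stops when a piece has length 0 or 1.
    Each call on a longer piece performs one constant-time split
    and recurses on the two halves.
    """
    if n <= 1:
        return 0
    mid = n // 2
    return 1 + count_splits(mid) + count_splits(n - mid)


for n in (1, 2, 8, 13, 1024):
    print(n, count_splits(n))  # the second value is always n - 1
```

A larger constant-size base case only removes some of the lowest levels of the recursion, so the count stays linear in n.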

Moreover, to understand the time complexity of the merge step (the finger algorithm), we need to know the number of sub-arrays.

In the worst case, the number of sub-arrays grows as O(n/2 + 1) = O(n).

The "finger algorithm" grows linearly with the number of sub-arrays: it loops through each of the O(n) sub-arrays, and for each sub-array it needs at most 2 more steps in the worst case, so the time complexity of the merge step (finger algorithm) is O(2n) = O(n).
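For reference, a two-finger merge can be sketched as follows. This is a minimal illustration rather than any particular implementation: the name `merge` and the comparison counter are assumptions added here to show the linear cost. Each comparison advances exactly one of the two fingers, so merging two sorted lists with n elements in total needs at most n - 1 comparisons.

```python
def merge(left, right):
    """Two-finger merge of two sorted lists.

    Returns (merged, comparisons). The counter is for illustration only.
    """
    i = j = 0
    merged = []
    comparisons = 0
    # Advance one finger per comparison until one list runs out.
    while i < len(left) and j < len(right):
        comparisons += 1
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # At most one of these still has elements; copy them over.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged, comparisons


merged, comparisons = merge([1, 4, 6, 7], [2, 3, 5, 8])
print(merged)       # [1, 2, 3, 4, 5, 6, 7, 8]
print(comparisons)  # 7
```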