"What is the complexity of Merge Sort?"

Understanding merge sort Big O complexity

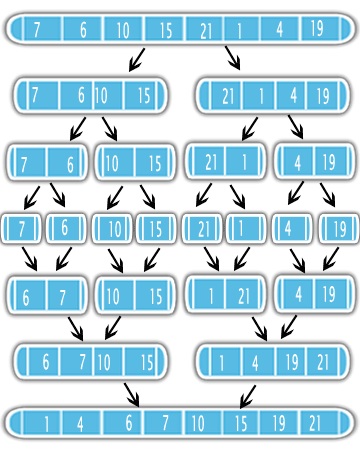

I'm going to be referring to this image. So the Big O of merge sort is nlogn.

So in the example I posted above, n = 8, so the Big O should be 8log(8) = 16. I think it's because in the first green level we go through 8 items and then merge, and we do the same thing for the second green level, so 8 + 8 = 16. But then I thought: when we split the initial array (the purple steps), doesn't that add to the time complexity as well?

Merge sort uses the divide-and-conquer approach to solve the sorting problem. First, it divides the input in half using recursion. After dividing, it sorts the halves and merges them into one sorted output. See the figure.

This means it is better to sort each half of your problem first and then run a simple merge subroutine. So it is important to know the complexity of the merge subroutine and how many times it will be called in the recursion.

The pseudo-code for the merge subroutine is really simple.

    # C = output array [length N]
    # A = first sorted half [length N/2]
    # B = second sorted half [length N/2]
    # (checks for an exhausted half are omitted for brevity)
    i = j = 1
    for k = 1 to N
        if A[i] < B[j]
            C[k] = A[i]
            i++
        else
            C[k] = B[j]
            j++

It is easy to see that every loop iteration performs 4 operations: k++, i++ or j++, the if comparison, and the assignment C[k] = A[i] or C[k] = B[j]. Counting the 2 initializations of i and j as well, the merge takes at most 4N + 2 operations, which gives O(N) complexity. For the sake of the proof, 4N + 2 will be bounded by 6N, since 4N + 2 <= 6N holds for every N >= 1.
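The pseudo-code above can be turned into a runnable sketch. The version below is one possible Python rendering, not the course's reference code: the function name `merge` and the `steps` counter are illustrative assumptions, and it adds the handling for an exhausted half that the pseudo-code leaves out. It uses `<=` instead of `<` so that equal elements keep their original order.

```python
def merge(a, b):
    """Merge two sorted lists into one sorted list.

    Returns (merged, steps), where steps counts the elements written
    to the output (a hypothetical counter added for illustration).
    """
    merged = []
    i = j = 0
    steps = 0
    # Main loop: runs while both halves still have elements left.
    while i < len(a) and j < len(b):
        steps += 1
        if a[i] <= b[j]:
            merged.append(a[i])
            i += 1
        else:
            merged.append(b[j])
            j += 1
    # One half is exhausted; copy whatever remains of the other.
    steps += (len(a) - i) + (len(b) - j)
    merged.extend(a[i:])
    merged.extend(b[j:])
    return merged, steps


merged, steps = merge([1, 4, 6, 7], [2, 3, 5, 8])
print(merged)  # [1, 2, 3, 4, 5, 6, 7, 8]
print(steps)   # 8
```

Each step writes exactly one element to the output, so the step count always equals the combined length of the two inputs. That is the linear behaviour the O(N) bound describes.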

So assume you have an input with N elements, and assume N is a power of 2. Each level has twice as many subproblems as the previous one, and each subproblem's input is half as long. This means that at level j = 0, 1, 2, ..., lgN there will be 2^j subproblems, each with an input of length N / 2^j. The number of operations at each level j will be less than or equal to

2^j * 6(N / 2^j) = 6N

Observe that the level doesn't matter: you will always have at most 6N operations per level.
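As a quick check of the per-level count, the sketch below tabulates the number of subproblems and their sizes for each level. The helper name `level_table` is a hypothetical choice, and it assumes N is a power of 2, as the argument above does.

```python
def level_table(n):
    """Return (level, subproblems, size, total_elements) for each level.

    Assumes n is a power of 2, as in the analysis above.
    """
    levels = n.bit_length() - 1  # lg(n) for a power of 2
    rows = []
    for j in range(levels + 1):
        subproblems = 2 ** j
        size = n // subproblems
        rows.append((j, subproblems, size, subproblems * size))
    return rows


for row in level_table(8):
    print(row)
# (0, 1, 8, 8)
# (1, 2, 4, 8)
# (2, 4, 2, 8)
# (3, 8, 1, 8)
```

The last column is N at every level, so multiplying by the constant 6 from the merge bound gives at most 6N operations per level.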

Since there are lgN + 1 levels, the complexity will be

O(6N * (lgN + 1)) = O(6N*lgN + 6N) = O(N lgN)
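To see the bound on a concrete input, here is a minimal top-down merge sort that also counts merge steps. It is a sketch under stated assumptions: the name `merge_sort` and the step counter are illustrative, and it counts only the elements written during merges, so the single-element level at the bottom contributes nothing and the total comes out as N lgN rather than N(lgN + 1).

```python
def merge_sort(items):
    """Return (sorted_list, merge_steps) for a top-down merge sort."""
    if len(items) <= 1:
        return list(items), 0
    mid = len(items) // 2
    left, left_steps = merge_sort(items[:mid])
    right, right_steps = merge_sort(items[mid:])

    # Merge the two sorted halves.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    # Each merge writes len(items) elements, so count that many steps.
    return merged, left_steps + right_steps + len(items)


result, steps = merge_sort([5, 2, 4, 7, 1, 3, 2, 6])
print(result)  # [1, 2, 2, 3, 4, 5, 6, 7]
print(steps)   # 24
```

For N = 8 there are lg(8) = 3 levels of merging with 8 element writes each, which gives 8 * 3 = 24. Splitting the list adds at most a constant factor per level, so it does not change the O(N lgN) result.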

References:

- Coursera course Algorithms: Design and Analysis, Part 1

In a "traditional" merge sort, each pass through the data doubles the size of the sorted subsections. After the first pass, the file will be sorted into sections of length two. After the second pass, length four. Then eight, sixteen, etc., up to the size of the file.

It's necessary to keep doubling the size of the sorted sections until there's one section comprising the whole file. It will take lg(N) doublings of the section size to reach the file size, and each pass over the data will take time proportional to the number of records.
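The pass-by-pass description can be sketched as an iterative, bottom-up merge sort. This is an illustrative Python version, not code from the answer: the name `bottom_up_merge_sort` and the `passes` counter are assumptions, and it works on an in-memory list rather than a file.

```python
def bottom_up_merge_sort(items):
    """Return (sorted_list, passes) using iterative, bottom-up merging."""
    data = list(items)
    n = len(data)
    width = 1   # current length of each sorted section
    passes = 0
    while width < n:
        merged = []
        # One pass: merge neighbouring sections of length `width`.
        for start in range(0, n, 2 * width):
            left = data[start:start + width]
            right = data[start + width:start + 2 * width]
            i = j = 0
            while i < len(left) and j < len(right):
                if left[i] <= right[j]:
                    merged.append(left[i])
                    i += 1
                else:
                    merged.append(right[j])
                    j += 1
            merged.extend(left[i:])
            merged.extend(right[j:])
        data = merged
        width *= 2  # sorted sections are now twice as long
        passes += 1
    return data, passes


result, passes = bottom_up_merge_sort([5, 2, 4, 7, 1, 3, 2, 6])
print(result)  # [1, 2, 2, 3, 4, 5, 6, 7]
print(passes)  # 3
```

With 8 records the section size goes 1, 2, 4, 8, so three passes are needed, and each pass touches every record once.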