c++ - Is there any "standard" way to calculate the numerical gradient? - Stack Overflow

vector analysis - How do we calculate the gradient from numerical data - Mathematics Stack Exchange

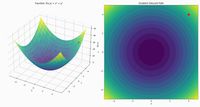

Simple numerical differentiation

Autograd function with numerical gradients

Videos

First, you should use the central difference scheme, which is more accurate: in the Taylor expansions of f(x + h) and f(x - h) the h^2 terms cancel, so the error is O(h^2) instead of O(h).

(f(x + h) - f(x - h)) / (2h)

rather than

(f(x + h) - f(x)) / h

The choice of h is then critical, and using a fixed constant is the worst thing you can do: for small x, h will be too large, so the approximation formula no longer works, and for large x, h will be too small, resulting in severe rounding (cancellation) error.

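A much better choice is to take a relative value, h = x√ε, where ε is the machine epsilon (1 ulp of 1.0, about 2.2e-16 for double), which gives a good tradeoff between truncation and rounding error. (Strictly, √ε is the classic optimum for the one-sided formula; for the central difference, balancing its O(h^2) truncation error against the O(ε/h) rounding error suggests h ≈ x∛ε instead.)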

(f(x(1 + √ε)) - f(x(1 - √ε))) / (2x√ε)

Beware that when x = 0, a relative value cannot work and you need to fall back to a constant. But then, nothing tells you which one to use!

You need to consider the precision required.

At first glance, since |y| = 5.49756e14 and epsi = 1e-4, you need at least ⌈log2(5.49756e14) - log2(1e-4)⌉ = 63 bits of significand precision (that is, the number of bits used to encode the digits of your number, also known as the mantissa) for y and y + epsi to be considered different.

The double-precision floating-point format has only 53 bits of significand precision (assuming double is the 8-byte IEEE 754 binary64 format). So, currently, f1, f2 and f3 are exactly the same, because y + epsi and y - epsi both round back to y.

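Now, let's consider the limit: y = 1e20, and the result of your function, 10x^3 + y^3. Let's ignore x for now and take f = y^3. Just telling y and y + epsi apart already requires ⌈log2(1e20) - log2(1e-4)⌉ = 80 bits. Since f(y + epsi) - f(y) ≈ 3*y^2*epsi = 3e36 while f(y) = 1e60, telling f(y) and f(y + epsi) apart needs about ⌈log2(1e60) - log2(3e36)⌉ = 79 bits, and computing f(y + epsi) exactly, down to its smallest term epsi^3 = 1e-12, needs ⌈log2(1e60) - log2(1e-12)⌉ = 240 bits.

Even the long double type may not help. On x86 it is usually the x87 extended format, stored in 16 bytes but with only a 64-bit significand, so y and y + epsi would still round to the same value and f1, f2 and f3 would still be equal. Where long double is the IEEE 754 quadruple format (113-bit significand), they would differ, but the result would still be rounded, far short of the 240 bits needed for an exact one.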

If we take x into account, the maximum value of f would be 1.1e61 (10e60 + 1e60, with x = y = 1e20). So the upper limit on the precision you need is ⌈log2(1.1e61) - log2(1e-12)⌉ = 243 bits, i.e. at least 31 bytes.

One way to solve your problem is to use another type, for example an arbitrary-precision (bignum) type used as fixed-point.

Another way is to rethink your problem and deal with it differently. Ultimately, what you want is f1 - f2, so you can try to expand f(y + epsi) symbolically. Again ignoring x, f(y + epsi) = (y + epsi)^3 = y^3 + 3*y^2*epsi + 3*y*epsi^2 + epsi^3, so f(y + epsi) - f(y) = 3*y^2*epsi + 3*y*epsi^2 + epsi^3, which you can evaluate directly without subtracting two nearly equal large numbers.

In an optimization problem where my dynamics are some unknown function I can't compute a gradient function for, are there more efficient methods of approximating gradients than directly estimating with a finite difference?